For the past few weeks, a persistent rumor has been spreading through the Claude user community: Opus 4.6, Anthropic’s flagship model, seems less sharp than before. Responses are slightly less precise, reasoning is less consistent, and rate limits are hit much faster. Nothing is official, but the sentiment is very widely shared. And now, in parallel, a model called Claude Opus 4.7 has just been spotted in the API’s internal references. Coincidence, or deliberate strategy?

This week also brought to light a quieter but equally significant project: Anthropic is reportedly working on a full-stack development studio integrated directly into Claude, which would position it against Lovable and Replit. Here is a roundup of a busy week, between accusations of a silent nerf, a controversy over a “token tax”, and groundwork for a future developer ecosystem.

Claude Opus 4.7 leak: was Opus 4.6 deliberately nerfed to make way for it?

The starting point is a widely shared impression. For several weeks, Opus 4.6 users have been reporting the same things: responses seem less sharp than before, reasoning holds up less well over time, and overall quality appears to have slipped a notch. On premium plans like Max, rate limits kick in much sooner than they did at the model’s launch.

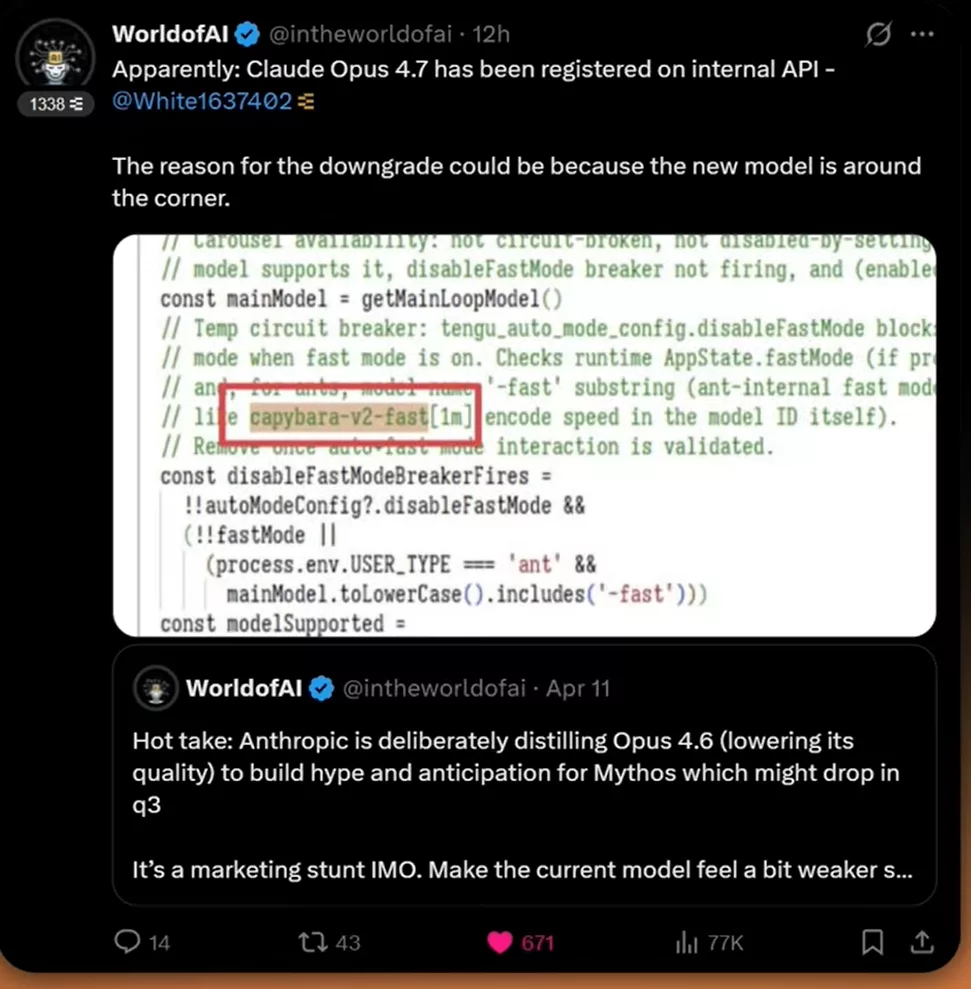

A hypothesis is circulating more and more openly on X: Anthropic allegedly distilled Opus 4.6 on purpose, in other words lowered its quality, to pave the way for the next generation. The idea is that if the current model seems a little less brilliant, its successor will look all the more impressive at launch, a marketing mechanic well known in the industry. The leak of Claude Opus 4.7 in the API references only adds fuel to the fire.

Anthropic has confirmed nothing officially, and no public statement proves a deliberate downgrade. But the sentiment is widespread enough to fuel every technical thread. Between those who talk about a real nerf and those who suggest an infrastructure rebalancing instead, the line is thin. Either way, trust has cracked slightly, and that is where the debate gets interesting. Anthropic was already under pressure after a recent episode in which the company suffered two major leaks in five days.

What matters here is that users pay a subscription for a level of performance they no longer consistently get. When Claude Code is expensive and quotas run out within hours instead of lasting the day, frustration builds fast. Doubt about model stability then becomes a commercial problem, not just a technical one.

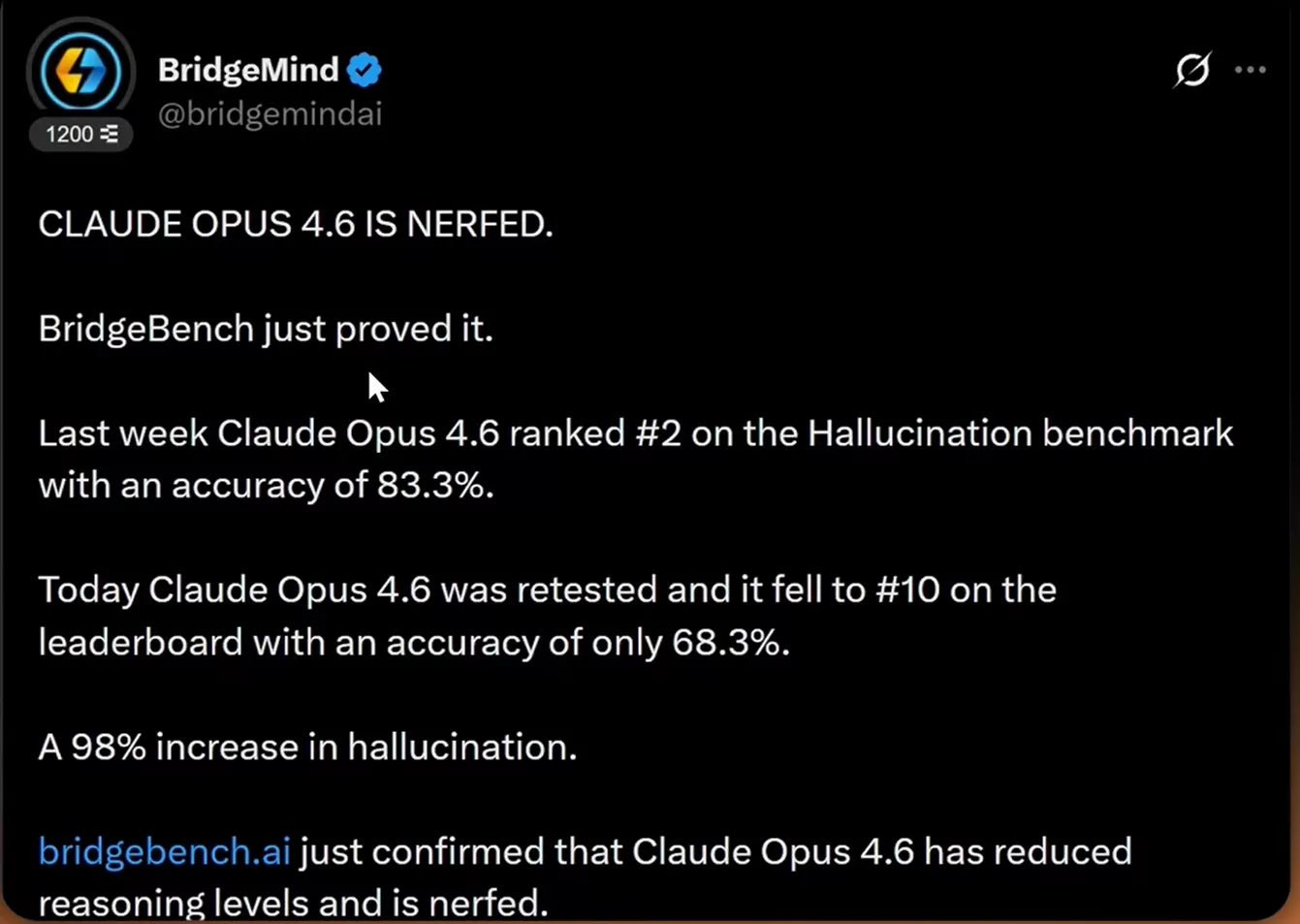

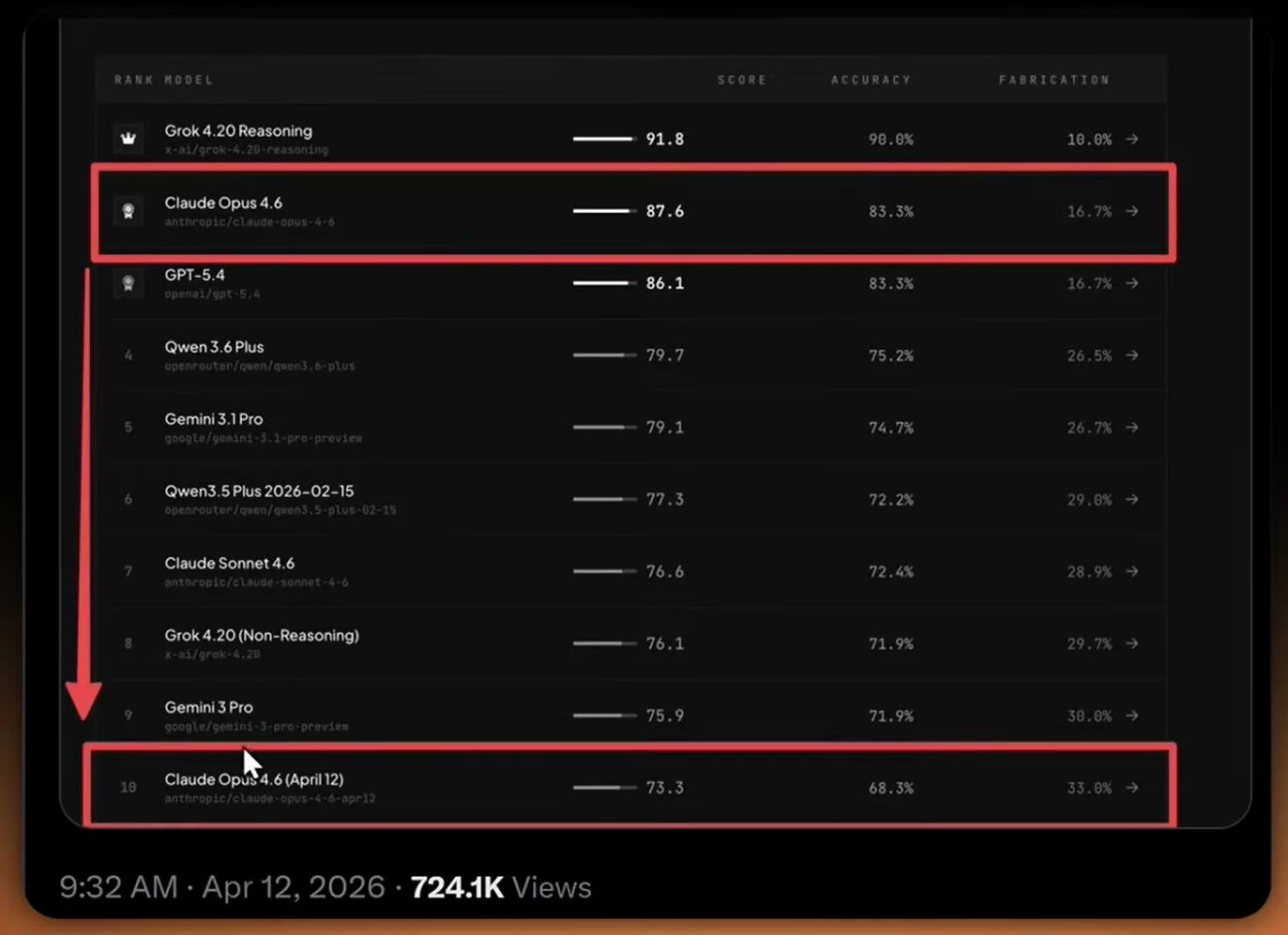

A benchmark that confirms the drop

Until now, everything rested on user perception. But a benchmark has just changed the picture. The BridgeBench platform retested Opus 4.6 this week, and the result sharply diverges from the previous measurement. Last week, the model was ranked second on their hallucination benchmark, with an accuracy of 83.3 percent. A very strong score, consistent with the model’s reputation.

After the retest, Opus 4.6 crashed to tenth place in the rankings, with accuracy down to 68.3 percent. That is fifteen accuracy points lost in a single week, on a model presented as stable. The number is brutal. It matches user reports and lends concrete weight to the silent-nerf theory. Even though a single benchmark is not absolute proof, the coincidence is too striking to ignore.
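The arithmetic behind those two scores is simple, but it helps to separate the absolute drop from the relative one. A minimal Python sketch using only the two BridgeBench accuracy figures quoted in this article (the function name `accuracy_drop` is illustrative):

```python
def accuracy_drop(before: float, after: float) -> tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop as a fraction)."""
    points = before - after       # percentage points lost
    relative = points / before    # share of the original score that was lost
    return points, relative

# BridgeBench hallucination-benchmark accuracy for Opus 4.6, as reported
points, relative = accuracy_drop(83.3, 68.3)
print(f"{points:.1f} points lost, {relative:.1%} relative drop")
# prints "15.0 points lost, 18.0% relative drop"
```

Measured against the starting score, the fifteen-point loss means the model gave up roughly 18 percent of its previous accuracy.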

The debate connects to a broader question about AI reliability, as explored by researchers who identified the H neurons at the root of hallucinations. When a hallucination score takes such a steep dive in a week, the question stops being technical and becomes editorial. Users want to understand what is happening.

Claude Opus 4.7 already spotted in internal code

This discovery may explain everything else. Claude Opus 4.7 was spotted in the internal references of the Anthropic API. Sightings like this typically happen shortly before a public release, following a pattern well known across all the major labs: the next model’s identifiers show up in logs, then in technical documentation, before any official announcement.

If this leak is confirmed, it directly connects the two pieces of the puzzle. A shift of resources from Opus 4.6 toward Claude Opus 4.7 would explain the performance drops observed on the older model. This is not necessarily a deliberate nerf; it may be an infrastructure trade-off. The GPUs that kept Opus 4.6 stable may be in the process of being redirected toward training or deploying Claude Opus 4.7.

This reading is probably the most realistic one. Anthropic constantly optimizes its costs and infrastructure, and preparing the launch of a new flagship model requires trade-offs. The previous model often bears the consequences, especially when quotas are shared. The Claude Mythos leak a few weeks ago had already shown how much of Anthropic’s roadmap slips through the cracks.

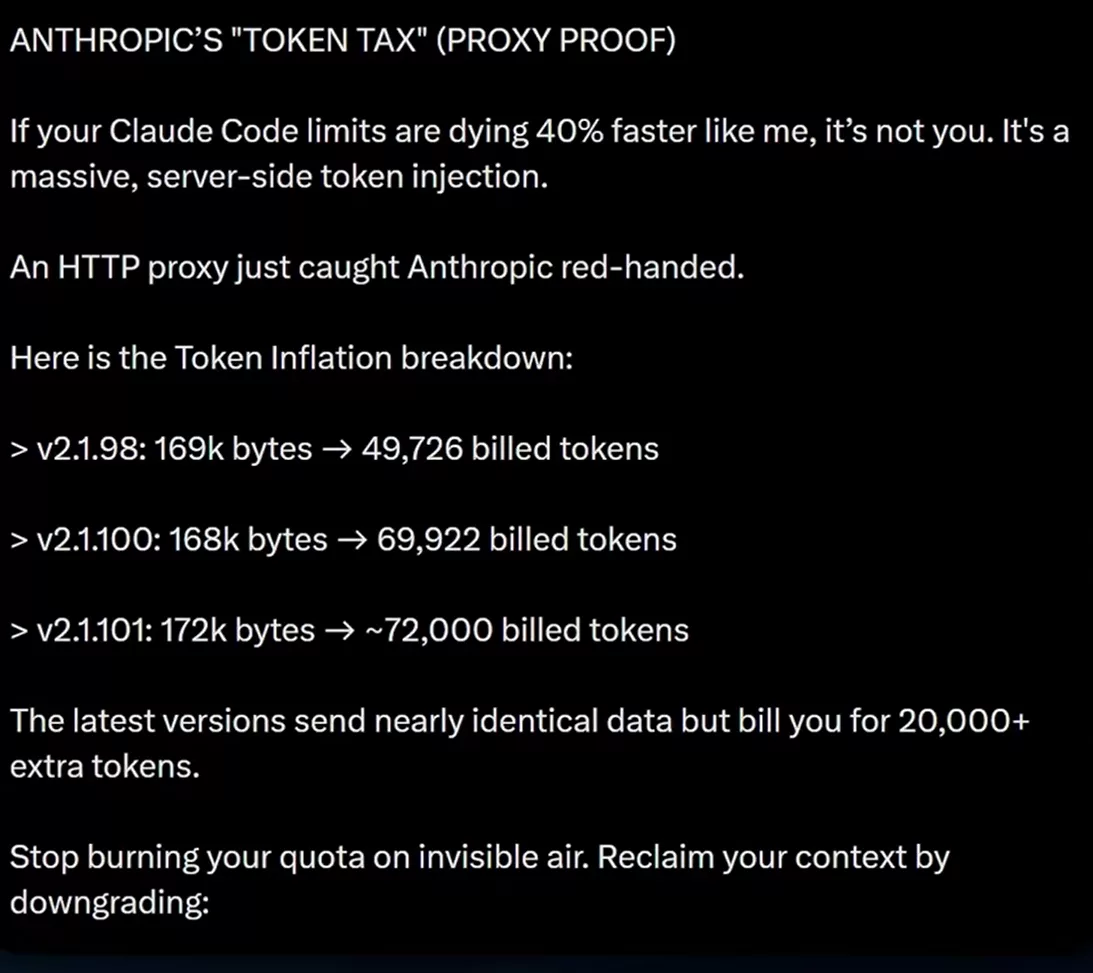

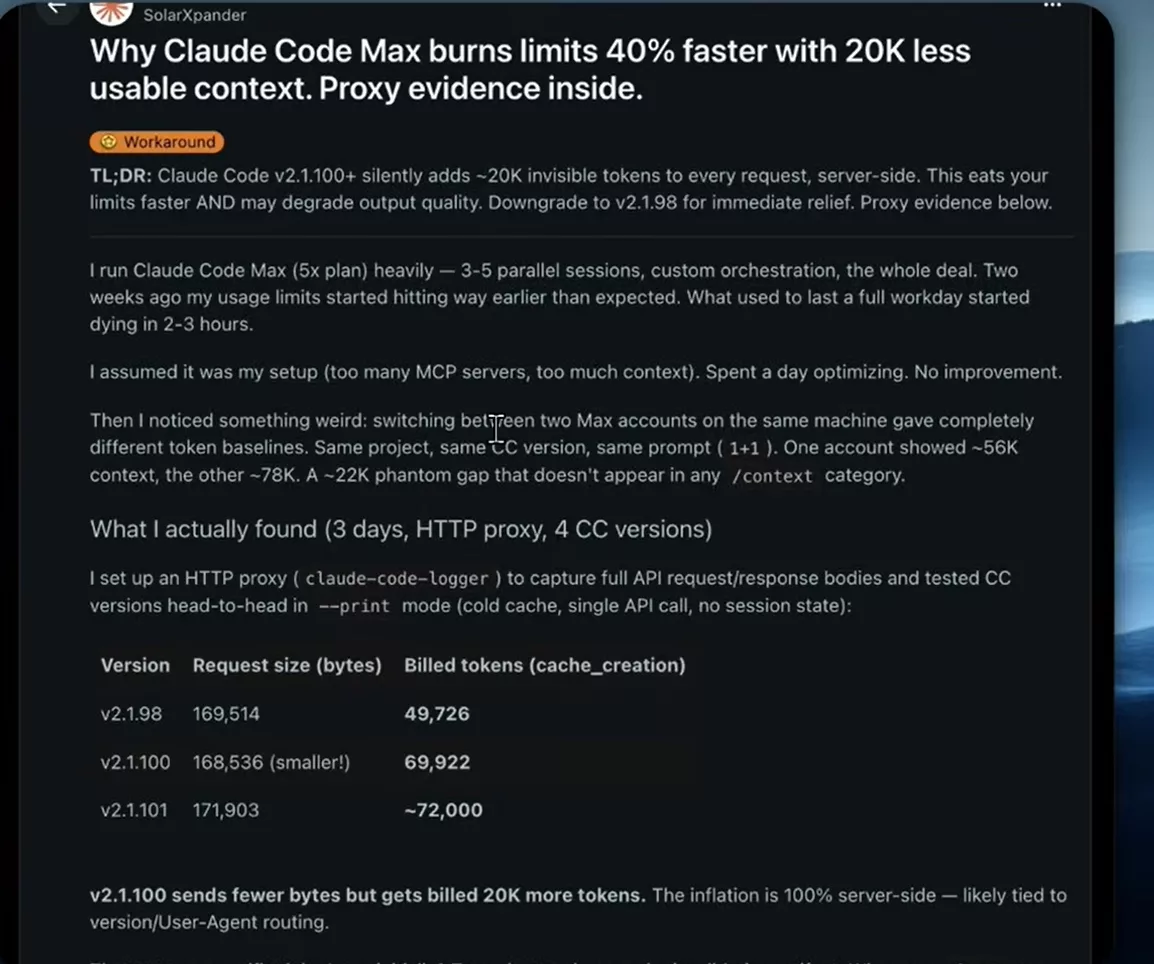

The “token tax” blowing through Claude Code limits

Another sensitive issue surfaced this week: several developers are now talking about a “token tax” applied by Anthropic on Claude Code. The story starts with an HTTP proxy that captured the requests sent by recent versions of the tool. The finding is unambiguous: newer versions inject a significant volume of additional tokens server-side, even when the user input remains virtually identical.
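For readers who want to reproduce this kind of measurement, the comparison comes down to sending the same prompt from two client versions and comparing the input-token counts the API reports. A minimal Python sketch, assuming each proxy capture has been saved as the JSON body of a non-streaming Messages API response, whose `usage` object reports `input_tokens` and, when prompt caching is involved, `cache_creation_input_tokens` and `cache_read_input_tokens`. Streamed responses would first need the `usage` object pulled out of the `message_start` event. The helper names and the capture contents below are hypothetical placeholders, not real measurements.

```python
import json

def total_input_tokens(response_body: str) -> int:
    """Sum every input-token field in the `usage` object of a captured response."""
    usage = json.loads(response_body).get("usage", {})
    return (
        usage.get("input_tokens", 0)
        + usage.get("cache_creation_input_tokens", 0)
        + usage.get("cache_read_input_tokens", 0)
    )

def token_overhead(old_capture: str, new_capture: str) -> int:
    """Extra input tokens processed by the newer client for the same user prompt."""
    return total_input_tokens(new_capture) - total_input_tokens(old_capture)

# Hypothetical captures for an identical prompt (placeholder numbers, not measurements)
old = '{"usage": {"input_tokens": 1200, "output_tokens": 300}}'
new = '{"usage": {"input_tokens": 1200, "cache_read_input_tokens": 20000, "output_tokens": 300}}'
print(token_overhead(old, new))  # prints 20000
```

Counting the cache fields matters: tokens served from the prompt cache do not appear in `input_tokens` alone, so ignoring them would understate the overhead.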

The figures put forward suggest around 20,000 additional tokens per request. With heavy usage, the bill climbs very fast, which would explain exactly why users hit their limits far sooner than before, even on the most expensive plans. The purpose of these tokens is not yet clear: they could be a larger system prompt, security metadata, or an expanded context system.
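To see why the bill climbs so fast, multiply the reported overhead by the number of requests. A rough Python sketch; the 20,000-token figure comes from the community captures, while the daily request counts are hypothetical workloads chosen only for illustration:

```python
OVERHEAD_PER_REQUEST = 20_000  # extra tokens per request, as reported by community captures

def extra_tokens(requests: int, overhead: int = OVERHEAD_PER_REQUEST) -> int:
    """Total additional tokens consumed over a given number of requests."""
    return requests * overhead

# Hypothetical daily workloads: light, moderate and heavy Claude Code usage
for requests_per_day in (10, 100, 500):
    print(f"{requests_per_day:>4} requests/day -> {extra_tokens(requests_per_day):>11,} extra tokens")
```

At 100 requests a day, the overhead alone reaches two million tokens, before a single token of the user’s own work is counted.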

Anthropic has not confirmed anything officially yet. But the network traces are solid enough that the discussion is not dying down: in technical threads, multiple engineers are sharing their own captures and arriving at the same order of magnitude. This forced transparency is probably one of the most interesting side effects of the episode. The more deeply AI tools embed themselves in workflows, the more closely their network behavior gets scrutinized.

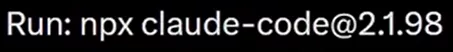

For affected users, a workaround already exists: going back to an earlier version of Claude Code, released before this behavior appeared. It takes a single command.

Claude Code is published on npm as `@anthropic-ai/claude-code`, so `npx @anthropic-ai/claude-code@2.1.98` runs version 2.1.98, which part of the community considers clean; installing it globally with `npm install -g @anthropic-ai/claude-code@2.1.98` makes the rollback stick. It is a temporary fix, not a lasting solution, but it eases the financial strain while waiting for an official response from Anthropic. The situation is a reminder of how important it is to test Claude Code’s real limits yourself rather than relying on announcements.

An Anthropic full-stack studio in the works

Alongside these controversies, Anthropic is preparing a considerably more ambitious project. The company is reportedly working on a platform modeled after Google AI Studio. The promise is clear: a complete full-stack vibe coding environment connected directly to Claude, where you can build an application, test it, and deploy it without leaving the Anthropic ecosystem.

If confirmed, this move would officially shift Anthropic from model provider to development platform. It would then compete directly with Lovable and Replit, the two players that currently dominate this segment of AI-assisted development. The difference is that Anthropic would arrive with its own native model, without any third-party abstraction layer: a decisive technical edge if the experience is well designed.

This strategy ties in with another, quieter announcement: Claude Code desktop is receiving an update that finally unifies the interface and lets developers work across multiple repositories within a single instance. That is no small detail for developers juggling several projects in parallel. Combined with the Managed Agents line, as the Claude Managed Agents beta announcement already hinted, the whole thing is starting to look like a genuine AI workstation.

Claude for Word enters beta

The last significant announcement of the week: Anthropic has begun rolling out Claude for Word in beta. The integration works directly from the Microsoft Word sidebar, letting you draft, edit, and revise a document with Claude without ever leaving the application. Changes appear as tracked revisions, exactly like those from a human colleague.

That detail matters more than it might seem. Until now, working with Claude on a long document meant copy-paste back-and-forth, with the formatting loss that comes with it. Native integration in Word removes that friction. Formatting is preserved, revision history remains readable, and the document keeps its identity. For now, the feature is limited to Team and Enterprise plans. But the signal is clear. Anthropic wants to embed Claude inside the productivity tools where users already spend their day, rather than imposing yet another interface on top.
