Anthropic held its "Code with Claude" conference this week, an event focused entirely on AI agents and the future of software development. The announcements were substantial: new multi-agent workflows, doubled usage limits for Claude Code, and a compute partnership with SpaceX to absorb the demands of intensive agentic workloads. At the heart of it all is Claude infinite context, a capability that may redefine what an AI coding assistant can actually do.

What really stands out is the direction Anthropic is taking for the next generation of models: Claude infinite context, advanced multi-agent orchestration, and persistent long-term reasoning. The stated goal is unambiguous — Claude should be far more than a chat assistant, and should function as a genuinely autonomous software engineering system.

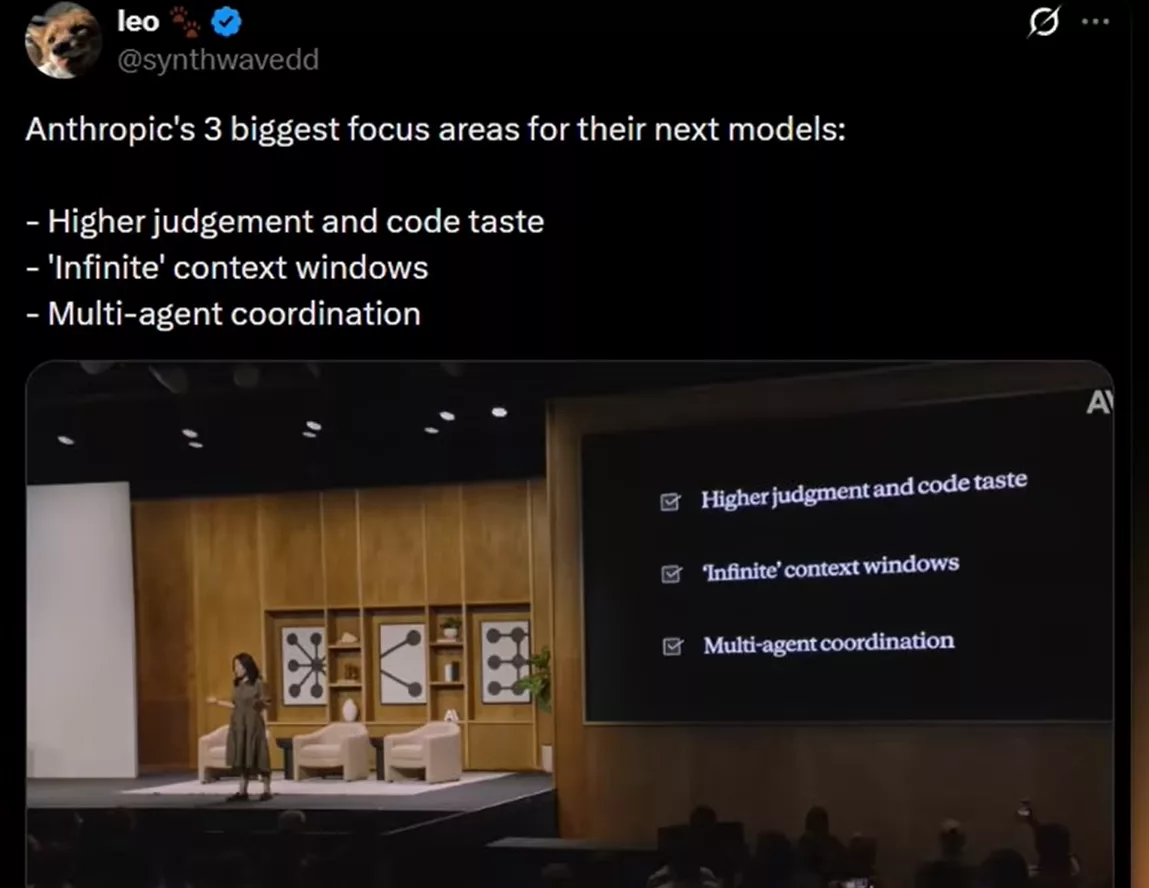

Three pillars for the next generation of Claude

At the conference, Anthropic laid out three core priorities for their next flagship model — whether that’s the Claude 5 family or the Mythos system already referenced in several recent leaks. Three concrete axes, not marketing fluff.

Code taste, Claude infinite context and parallel execution

First axis: improving engineering judgment and "code taste". The goal is no longer to chase benchmarks but to train a model that genuinely understands system architecture, thinks about maintainability, and reasons like a senior developer.

Second axis: Claude infinite context — a memory window that never saturates. In practice, an agent could hold an entire repository, multiple active projects, past sessions, and external tool data in mind simultaneously, without ever losing the thread.

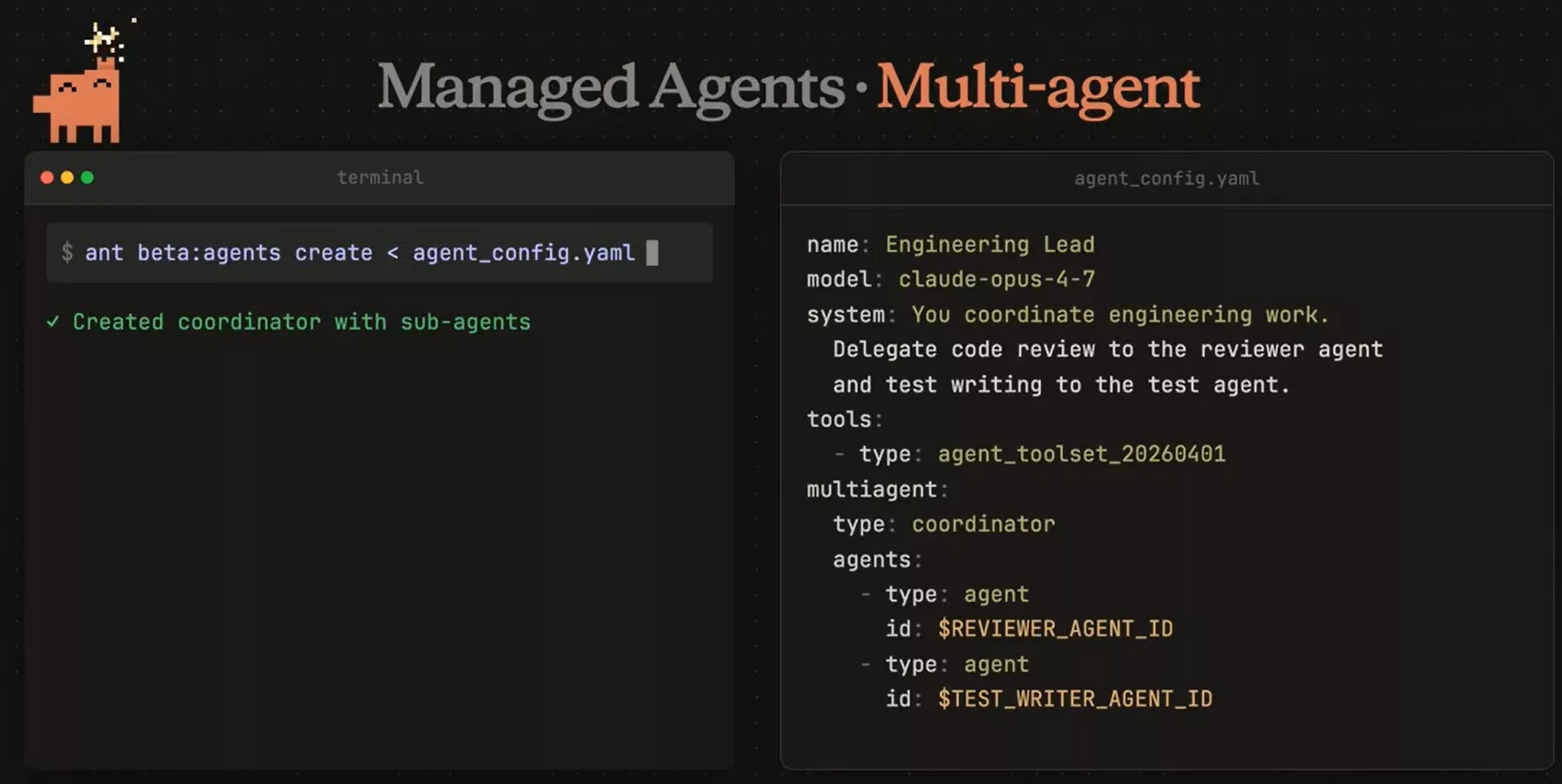

Third axis: multi-agent orchestration. A lead agent delegates tasks to specialized sub-agents running in parallel — frontend, backend, debugging, research — all coordinated in real time.

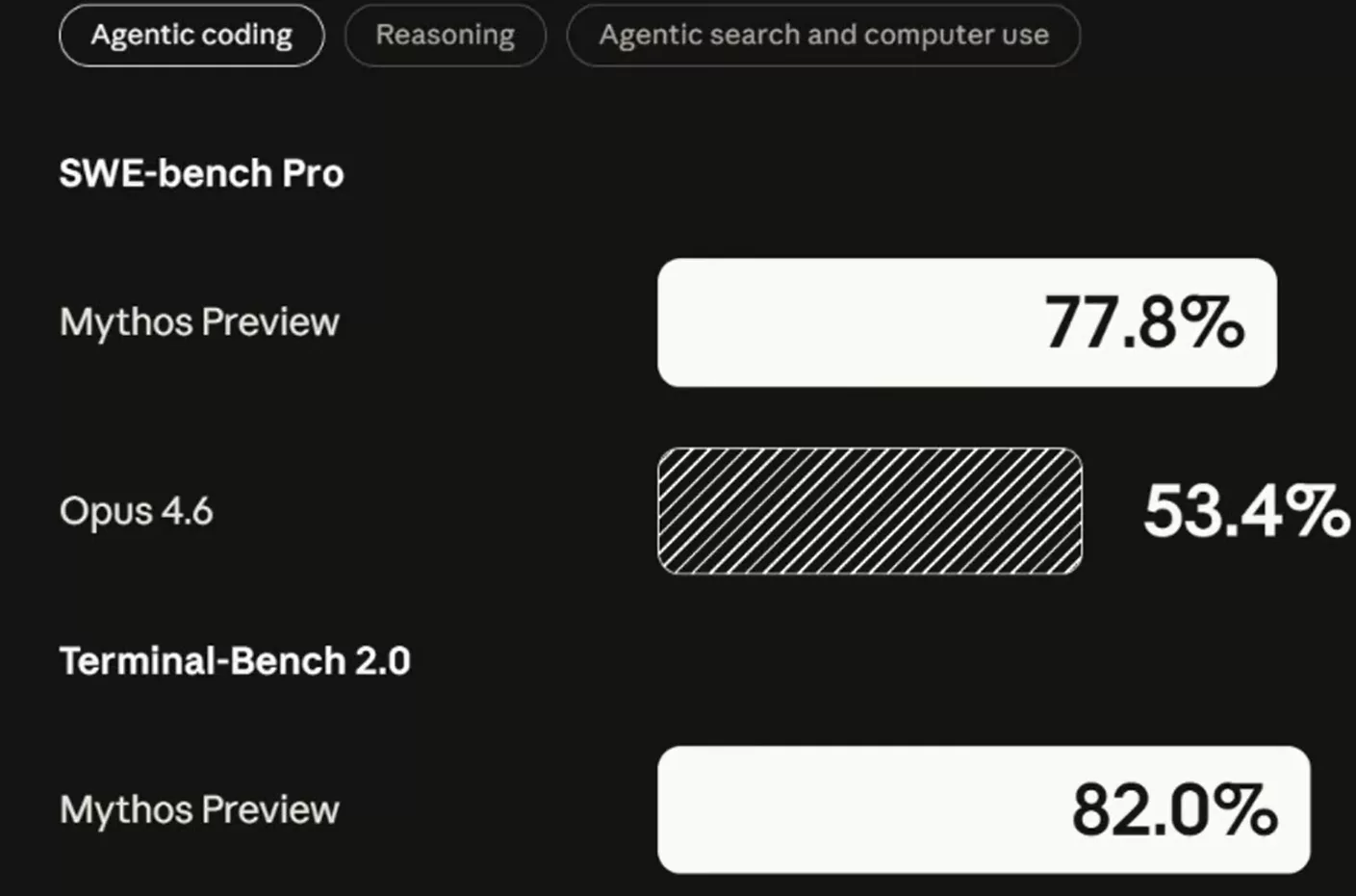

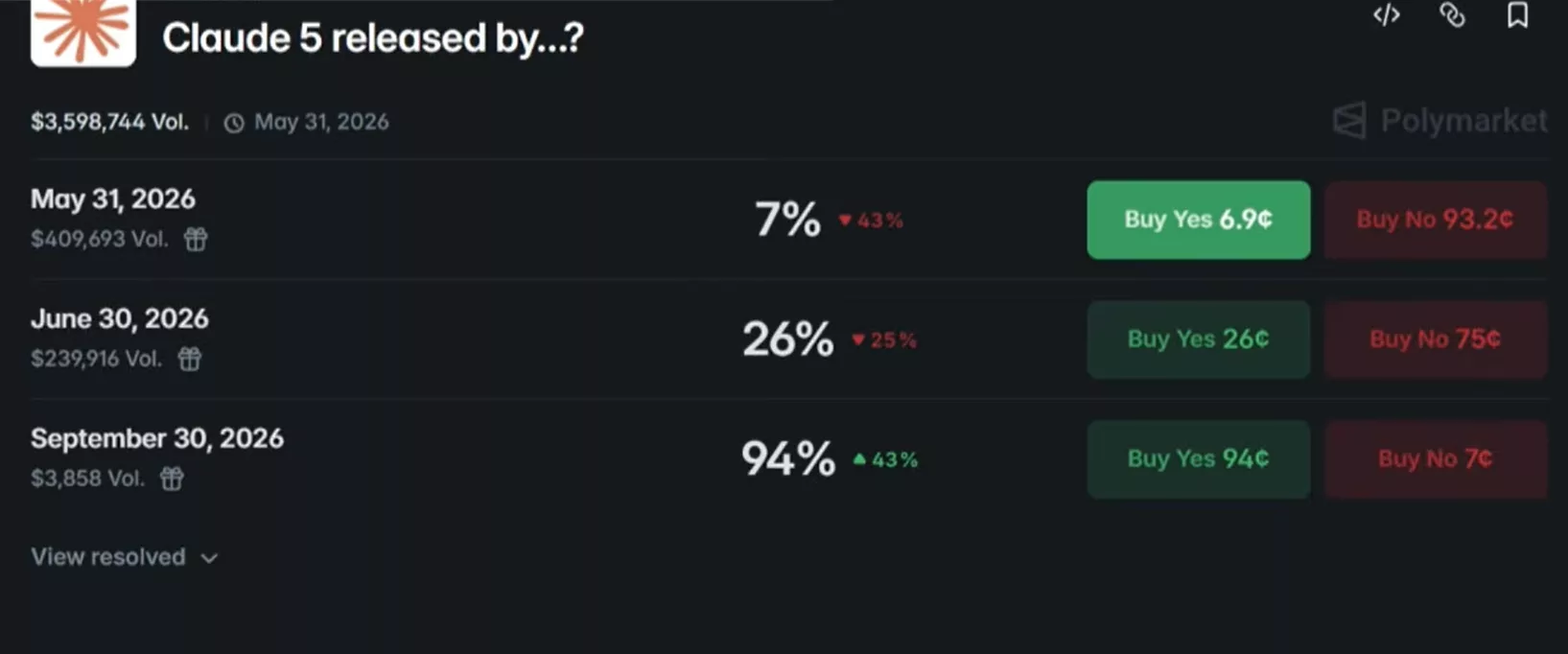

On the performance side, the early numbers circulating around the Mythos preview are already striking. On SWE-bench Pro and Terminal-Bench 2.0, Mythos pulls a clear lead over Opus 4.6 — roughly 25 points ahead. As for timing, Polymarket speculation is in full swing: a September release looks more realistic than a May or June launch.

If Anthropic manages to combine all three pillars (code taste, Claude infinite context, and multi-agent orchestration), the result would be a one-person dev team that never loses context and delegates intelligently. Sonnet and Haiku haven't been updated in a while, so a version bump for both models could follow soon, in line with early hints spotted in Anthropic's codebase.

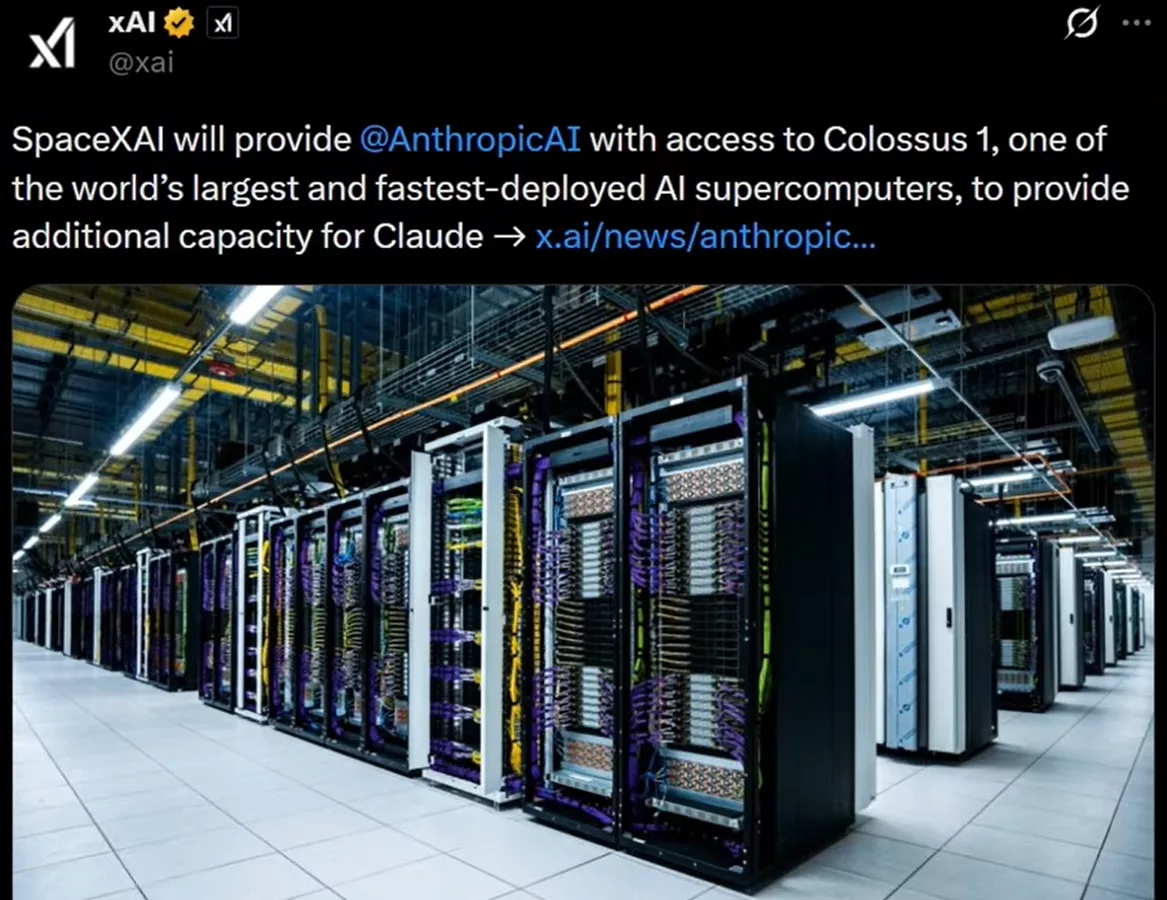

Doubled limits thanks to the SpaceX deal

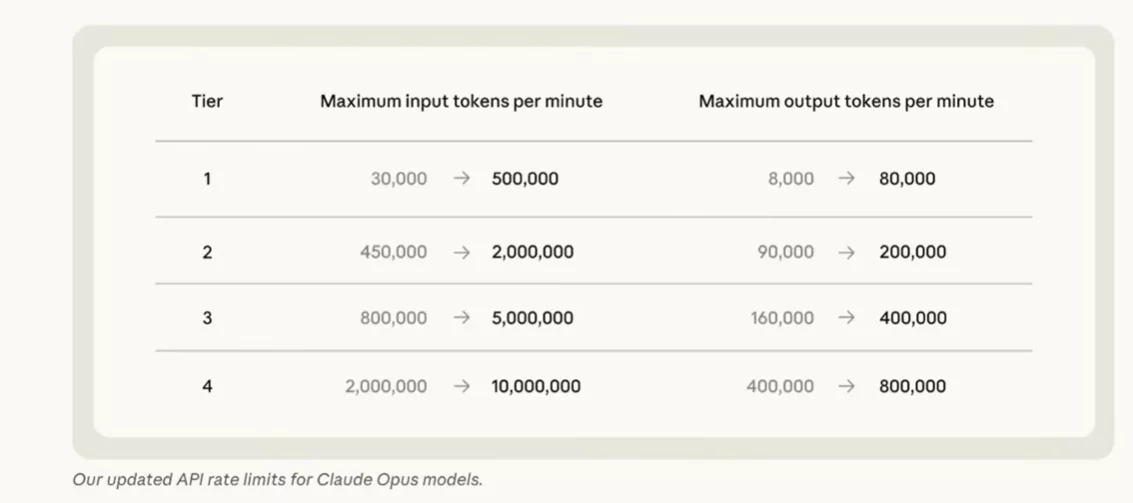

Anthropic has doubled Claude Code usage limits across all paid plans (Pro, Max, Teams and Enterprise). The 5-hour usage cap is now twice as high, and the capacity reduction during peak hours — which penalized Pro and Max subscribers — has been removed. API rate limits for Claude Opus models, already benchmark leaders, have also been significantly raised.

This jump is made possible by a deal signed with SpaceX. Anthropic now has access to the full capacity of the Colossus 1 data center: over 300 megawatts of capacity and more than 220,000 Nvidia GPUs, available within the coming month. The company says this new capacity will directly improve availability and performance for all Claude users. Anthropic also revealed several infrastructure partnerships already underway, including a multi-gigawatt agreement involving Google, Amazon, Broadcom, Microsoft, Nvidia, and Fluidstack.

The most unexpected project remains Anthropic’s mention of developing orbital AI compute infrastructure with SpaceX. Servers in space powering AI — it’s no longer science fiction.

Managed Agents: orchestration, dreaming and webhooks

Anthropic used this update to significantly expand the capabilities of managed agents launched in beta earlier this year. The goal is clear: enabling Claude to handle long, complex tasks in a genuinely autonomous way, without constant human intervention. Combined with Claude infinite context, these agents can now sustain coherent multi-session work across large codebases.

Four key new capabilities

The first major addition is multi-agent orchestration. A lead agent coordinates multiple specialized agents working in parallel. The config file shown alongside sets the tone: an Engineering Lead that delegates code review to a reviewer agent and test writing to a test writer agent.
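To make the delegation pattern concrete, here is a minimal sketch in plain Python. It is not Anthropic's config format or API: the `SubAgent` and `LeadAgent` classes, the task kinds, and the canned results are all hypothetical, and the model call is replaced by a stub. It only illustrates a lead handing review and test writing to two specialists that run concurrently.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubAgent:
    """Hypothetical specialized agent; `role` is only a label in this sketch."""
    name: str
    role: str

    async def run(self, task: str) -> str:
        # Stand-in for a real model call: yield control, then return a canned result.
        await asyncio.sleep(0)
        return f"[{self.name}] {self.role}: {task}"


@dataclass
class LeadAgent:
    """Hypothetical lead that maps each task kind to the sub-agent handling it."""
    name: str
    delegates: dict[str, SubAgent]

    async def handle(self, tasks: dict[str, str]) -> dict[str, str]:
        # Fan out: start every delegated task concurrently, then collect results.
        kinds = list(tasks)
        results = await asyncio.gather(
            *(self.delegates[kind].run(tasks[kind]) for kind in kinds)
        )
        return dict(zip(kinds, results))


lead = LeadAgent(
    name="engineering-lead",
    delegates={
        "code_review": SubAgent("reviewer", "review"),
        "test_writing": SubAgent("test-writer", "write tests"),
    },
)

report = asyncio.run(
    lead.handle({
        "code_review": "check the diff in auth.py",
        "test_writing": "cover the login flow",
    })
)
for kind, result in report.items():
    print(kind, "->", result)
```

The routing table keeps the lead's job declarative: adding a specialist means adding one entry, and `asyncio.gather` returns results in the same order the tasks were submitted.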

Anthropic also introduces an outcome loop — a self-correction cycle. The agent evaluates its own outputs against defined criteria, then iterates until it hits the expected quality bar. The dreaming feature follows the same logic: agents can review their past sessions, analyze mistakes, and adjust their behavior over time.
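The self-correction cycle can be sketched as a small loop: produce a draft, check it against explicit criteria, feed the failures back, and stop once everything passes. The function name `outcome_loop`, the criteria, and the toy agent below are assumptions made for illustration; nothing here reflects how Anthropic implements the feature.

```python
from typing import Callable, Optional


def outcome_loop(
    produce: Callable[[Optional[str]], str],
    criteria: list[Callable[[str], Optional[str]]],
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Iterate until every criterion passes or the round budget runs out.

    Each criterion returns None on success or a feedback string on failure.
    """
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        draft = produce(feedback)
        failures = [msg for check in criteria if (msg := check(draft)) is not None]
        if not failures:
            return draft, round_no
        feedback = "; ".join(failures)
    raise RuntimeError(f"quality bar not met after {max_rounds} rounds: {feedback}")


def toy_agent(feedback: Optional[str]) -> str:
    # Hypothetical stand-in for a model: it only adds what the feedback asks for.
    if feedback and "docstring" in feedback:
        return 'def add(a, b):\n    """Return a + b."""\n    return a + b\n'
    return "def add(a, b):\n    return a + b\n"


def has_docstring(draft: str) -> Optional[str]:
    return None if '"""' in draft else "missing docstring"


def defines_add(draft: str) -> Optional[str]:
    return None if "def add(" in draft else "missing add()"


final, rounds = outcome_loop(toy_agent, [has_docstring, defines_add])
print(f"accepted after {rounds} round(s)")
```

The round budget matters in practice: without `max_rounds`, an agent that cannot satisfy a criterion would iterate forever instead of surfacing the failure.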

Support for webhooks rounds out the picture. Developers can connect Claude directly to their tools, applications, and automated workflows, without complex configuration.
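As a rough illustration of what a webhook integration involves on the developer's side, the sketch below verifies and routes an incoming event. Everything specific in it is assumed: the article does not describe the payload shape, the event types, or whether requests are signed, so the HMAC-SHA256 check simply follows a convention many webhook providers use.

```python
import hashlib
import hmac
import json


def sign(body: bytes, secret: bytes) -> str:
    """Hex HMAC-SHA256 of the raw body (an assumed scheme, common for webhooks)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str, secret: bytes) -> dict:
    """Verify the signature, then map the event to a local action name."""
    # Constant-time comparison avoids leaking how much of the signature matched.
    if not hmac.compare_digest(sign(body, secret), signature):
        return {"status": 401, "action": None}
    event = json.loads(body)
    # Hypothetical event types; a real integration would use the documented ones.
    routes = {
        "session.completed": "post_summary_to_chat",
        "session.failed": "open_incident_ticket",
    }
    action = routes.get(event.get("type"))
    # Unknown event types are acknowledged (202) but trigger nothing.
    return {"status": 200 if action else 202, "action": action}


secret = b"demo-secret"
body = json.dumps({"type": "session.completed", "session_id": "s-1"}).encode()
ok = handle_webhook(body, sign(body, secret), secret)
bad = handle_webhook(body, "0" * 64, secret)
print(ok)
print(bad)
```

Keeping the handler a pure function of the raw body, the signature, and the secret makes it easy to test without a running HTTP server, and it can be dropped into whichever web framework receives the request.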

Taken together, these additions fundamentally change what managed agents are. This is no longer a simple assistant responding to queries — it’s a system capable of operating as a persistent, autonomous team: one that learns, self-corrects, and integrates into real working environments. With Claude infinite context as the backbone, the gap between AI assistant and AI engineer keeps narrowing.
